Start Moving Data Within the Hour

If you could go back in time, and you wanted to see Einstein cry, ask him to come up with a “Theory of Data Movement” instead of a “Theory of Relativity.”

Moving data between different vendor database platforms is close to impossible.

Every vendor has its own load utilities, and it takes the equivalent of a Ph.D. to learn how to write even the simplest load script.

I talked with the most famous data warehouse expert in the world recently. He told me that he was working on a project with Teradata. He said the project was to convert 30 SQL Server tables to Teradata tables. It took him 30 days!

I didn’t have the heart to tell him I could do it within 30 seconds.

It has been my goal to not only master data movement between databases, but to do so at record speed, and to make it so simple that anyone can do it with a few clicks of the mouse.

It has taken us 15 years to make that happen, but now we can move data between database systems in 80 different ways.

I have two visions for data movement:

- Provide the ETL and DBA teams with the ability to move thousands of tables, both small and large, as the lifeblood of the nightly batch process.

- Bring data movement to the masses so that business users can move data to their sandbox and perform federated queries.

If you want employees to access any data, at any time, anywhere, each of them needs to be able to point, click, and move!

Do you know why the CTO of your company is cursing me out right now?

Because when you give users the power to move data anywhere at the touch of their PC keyboard, the data moves from the source system through their PC and onto the target system. Moving small amounts of data (100,000 rows or fewer) is not a problem, but move big data, and you are looking at total network saturation.
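To put a rough number on that, here is a minimal Python sketch of the arithmetic. The row width and link speed are assumptions chosen only for illustration, and the function names are hypothetical. The sketch ignores protocol overhead and treats the inbound and outbound copies as sharing one link, so read the result as an order-of-magnitude estimate rather than a measurement.

```python
def bytes_through_pc(rows, avg_row_bytes):
    """Bytes crossing the user's network link when the PC relays the data.

    Every row arrives from the source and leaves again for the target,
    so each row crosses the link twice.
    """
    return 2 * rows * avg_row_bytes


def transfer_seconds(total_bytes, link_mbps):
    """Idealized transfer time over a link rated in megabits per second."""
    return total_bytes * 8 / (link_mbps * 1_000_000)


if __name__ == "__main__":
    # Assumed values: 200-byte rows and a 100 Mbps office link.
    for rows in (100_000, 1_000_000_000):
        total = bytes_through_pc(rows, avg_row_bytes=200)
        secs = transfer_seconds(total, link_mbps=100)
        print(f"{rows:>13,} rows: {total:,} bytes, about {secs:,.1f} s")
```

Under these assumptions the 100,000-row job finishes in a few seconds, while a billion-row job would occupy the same link for hours.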

It would be a huge competitive advantage if you were able to allow your team to move data where they need it, but who can take the risk?

The only solution is two-fold.

- Build the best query and BI tool in the world for the user’s desktop and then allow them to move any data, at any time, anywhere.

- The query tool must then communicate with a powerful server so that the scripts can execute from the server in the best physical location.

Let me introduce you to the Nexus Query Chameleon and the NexusCore Server.

I will demonstrate just how easy yet powerful it is to move data from Teradata directly to Snowflake. In the picture that follows, the user merely needs to right-click on their Nexus system tree, and at the database or schema level, choose “Move Data To.”

From the menu, we have chosen the Teradata system as our source and the Snowflake system as our target. That’s pretty easy.

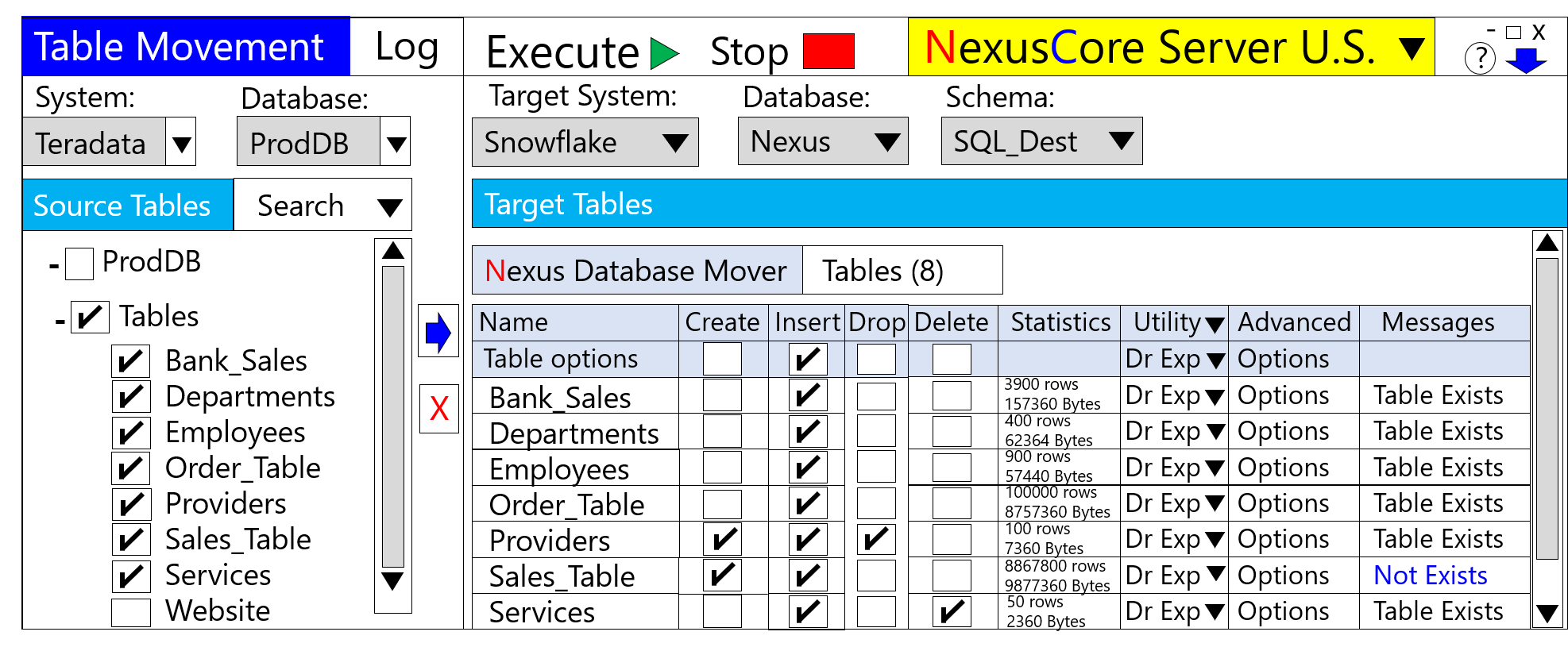

The Nexus Query Chameleon then takes you to the amazing Nexus Database Mover. See the picture below. Choose the target system, database, and schema where you want to move the data.

Place a checkmark on the tables you want to move and hit the big blue button. All tables with a checkmark move to the right, and each will move to the target system.

The Nexus automatically converts the table structures and builds the data movement scripts by utilizing each vendor’s load utilities.
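To give a feel for what converting a table structure involves, here is a minimal Python sketch that rewrites a few Teradata column types into Snowflake equivalents and emits a `CREATE TABLE` statement. This is not the Nexus conversion logic. The mapping is a small, incomplete illustration, and the names `TYPE_MAP`, `convert_type`, and `build_create_table` are hypothetical.

```python
# Illustrative and incomplete: a handful of Teradata types that need
# renaming for Snowflake. Types not listed here are passed through as-is.
TYPE_MAP = {
    "BYTE": "BINARY",
    "VARBYTE": "VARBINARY",
    "CLOB": "VARCHAR",
    "BLOB": "BINARY",
}

# Large-object lengths are dropped in this sketch so the target default applies.
DROP_LENGTH = {"CLOB", "BLOB"}


def convert_type(source_type):
    """Map one Teradata column type string to a Snowflake type string."""
    base, paren, rest = source_type.partition("(")
    base = base.strip().upper()
    target = TYPE_MAP.get(base, base)
    if base in DROP_LENGTH:
        return target
    return target + paren + rest


def build_create_table(table, columns):
    """Build target DDL from a list of (column_name, source_type) pairs."""
    body = ",\n".join(f"    {name} {convert_type(ctype)}" for name, ctype in columns)
    return f"CREATE TABLE {table} (\n{body}\n);"


if __name__ == "__main__":
    print(build_create_table(
        "sales",
        [("id", "INTEGER"), ("amount", "DECIMAL(10,2)"), ("note", "CLOB")],
    ))
```

A real converter also has to deal with indexes, constraints, character sets, and default values, which is why doing this by hand for every table takes so long.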

Now comes the amazing part!

The user has two options. They can execute the automated data movement scripts from their PC (for smaller data), or they can execute the scripts from any NexusCore Server. The Nexus desktop passes your credentials to the server, and now you are moving data from the source to the server to the target!

No data moves through the local network or anyone’s PC. A message will arrive at your PC, telling you that your data movement job was successful.

It is as if you are sitting at the keyboard of the server! Hey, you can be in two places at one time!
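The choice between the two options can be pictured with a short Python sketch. It assumes the 100,000-row figure mentioned earlier as the cutoff for a small job and prefers a server at the same site as the source data. The function name, the site labels, and the server names are all hypothetical. This is not how the Nexus decides, since in the product the user makes the choice.

```python
SMALL_JOB_MAX_ROWS = 100_000  # cutoff taken from the "small data" figure above


def choose_executor(row_count, source_site, servers):
    """Pick where a data movement script should run.

    servers maps a server name to the site it runs in, for example
    {"core-east": "east-dc"}. Returns "desktop" or a server name.
    """
    if row_count <= SMALL_JOB_MAX_ROWS or not servers:
        return "desktop"
    # Prefer a server sitting next to the source data.
    for name, site in servers.items():
        if site == source_site:
            return name
    # Otherwise any server keeps the data off the user's PC.
    return next(iter(servers))


if __name__ == "__main__":
    servers = {"core-east": "east-dc", "core-cloud": "cloud"}
    print(choose_executor(50_000, "east-dc", servers))
    print(choose_executor(5_000_000, "east-dc", servers))
    print(choose_executor(5_000_000, "west-dc", servers))
```

The point of the sketch is the routing idea: small jobs can stay on the desktop, and everything else runs from the server closest to the data.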

Here are the reasons this technology will be so valuable to your organization:

- You can migrate on-premises data to the cloud instantly.

- You can save a ton of money by using the NexusCore as your ETL tool.

- Users can move data to and from any system into their sandbox.

- You can set up multiple NexusCore Servers in all the locations you have data. You can even set up a NexusCore Server on the cloud.

- Your users can now run federated queries and join data from anywhere.

The Nexus can currently move data between Teradata, Oracle, SQL Server, DB2, Amazon Redshift, Azure SQL Data Warehouse, Netezza, MySQL, Postgres, Greenplum, SAP HANA, and Hadoop. And of course, they all move to Snowflake!

Please give me a call or drop me an email to see a demo. I will help you set everything up and then put the software in your hands for a free trial. I look forward to our partnership.

Sincerely,

Tera-Tom

Tom Coffing

CEO, Coffing Data Warehousing

Direct: 513 300-0341

Website: www.CoffingDW.com

YouTube channel: CoffingDW

Email: Tom.Coffing@CoffingDW.com